Same data, two interfaces.

Scripts talk to rp over a Unix socket. Humans get a GUI with a dashboard, a topic monitor, and a bag recorder.
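As a rough illustration of the scripting side, the sketch below shows how a script might talk to a daemon over a Unix socket. The source does not document rp's socket path or wire format, so everything specific here is an assumption: the path `/run/rp/rp.sock`, the newline-delimited JSON framing, and the `topic.list` method name are all hypothetical placeholders, not rp's actual API.

```python
import json
import socket

# Hypothetical socket path; the real location is not given in this README.
SOCKET_PATH = "/run/rp/rp.sock"


def encode_request(method, params=None):
    """Serialize a request as one line of JSON (assumed framing, not rp's documented protocol)."""
    payload = {"method": method, "params": params or {}}
    return (json.dumps(payload) + "\n").encode("utf-8")


def read_reply(sock):
    """Read bytes until a newline arrives, then decode the line as JSON."""
    buf = b""
    while not buf.endswith(b"\n"):
        chunk = sock.recv(4096)
        if not chunk:
            break  # peer closed the connection
        buf += chunk
    if not buf:
        raise ConnectionError("socket closed before any reply was received")
    return json.loads(buf.decode("utf-8"))


def call(method, params=None, path=SOCKET_PATH):
    """Open a Unix stream socket, send one request, and return the decoded reply."""
    with socket.socket(socket.AF_UNIX, socket.SOCK_STREAM) as sock:
        sock.connect(path)
        sock.sendall(encode_request(method, params))
        return read_reply(sock)
```

With a daemon that spoke this assumed protocol, a script would call something like `call("topic.list")`. Check rp's own documentation for the real socket path and message format before relying on any of these names.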

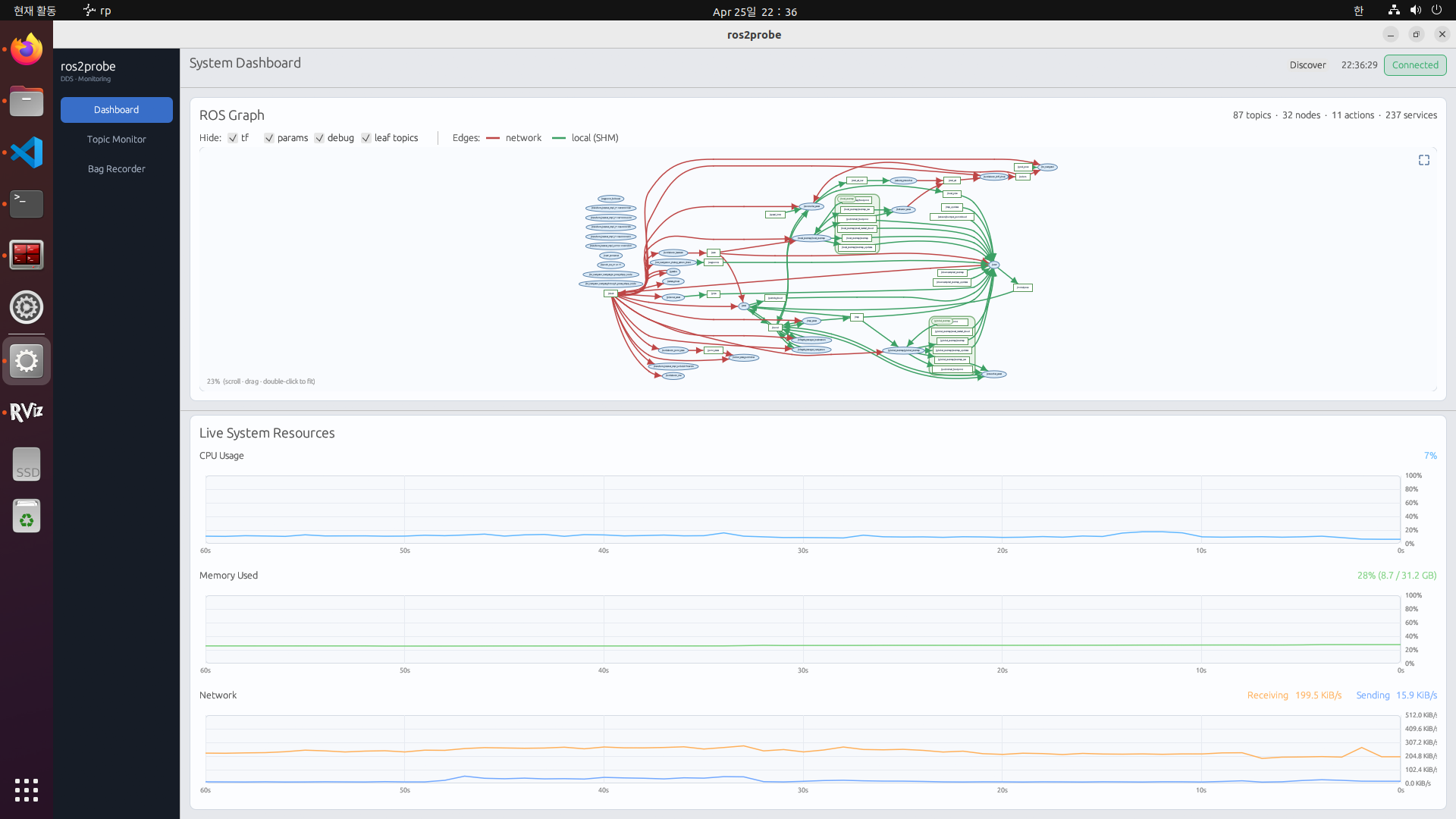

rp gui → Dashboard

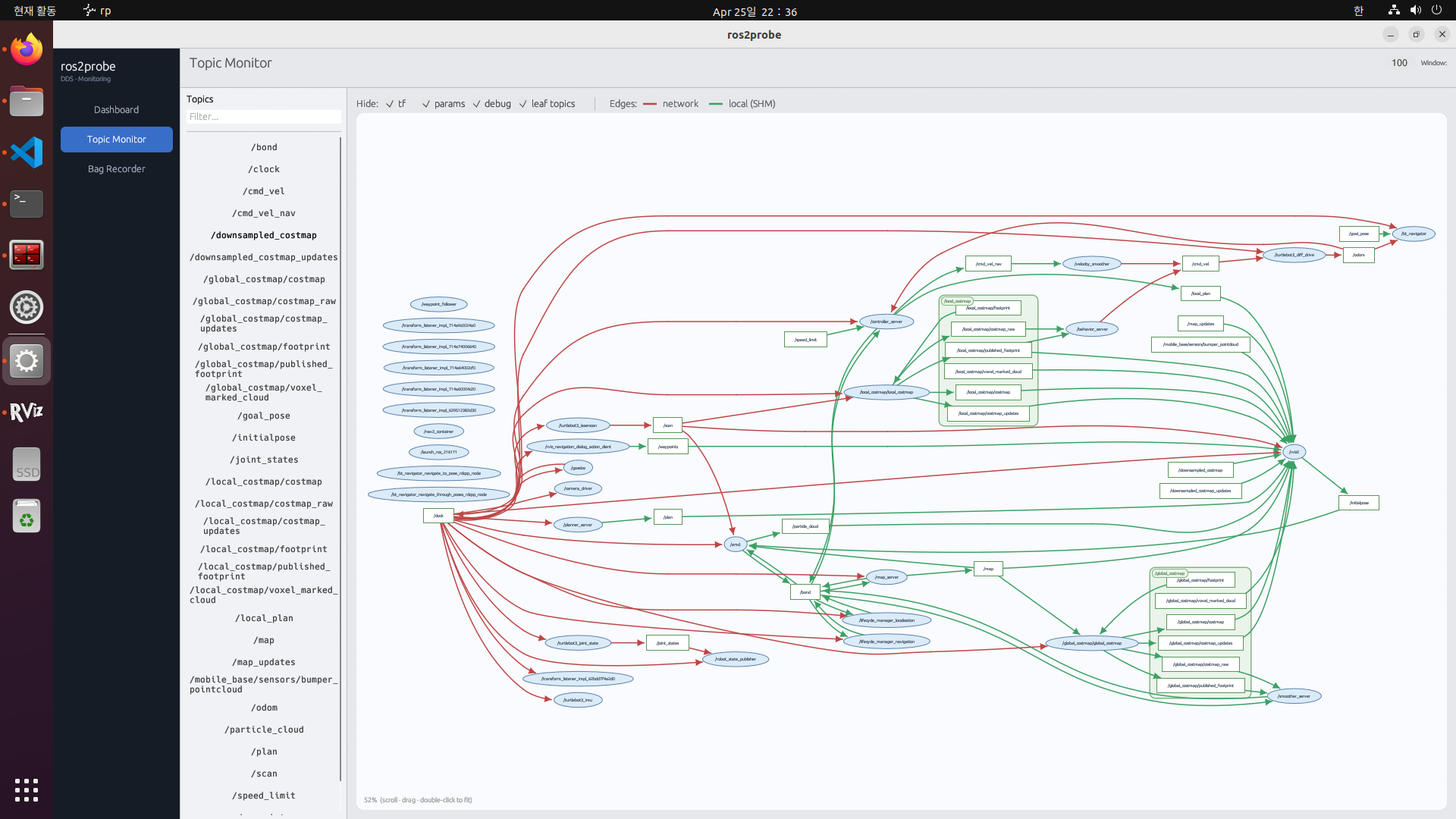

rp gui → Topic Monitor 1

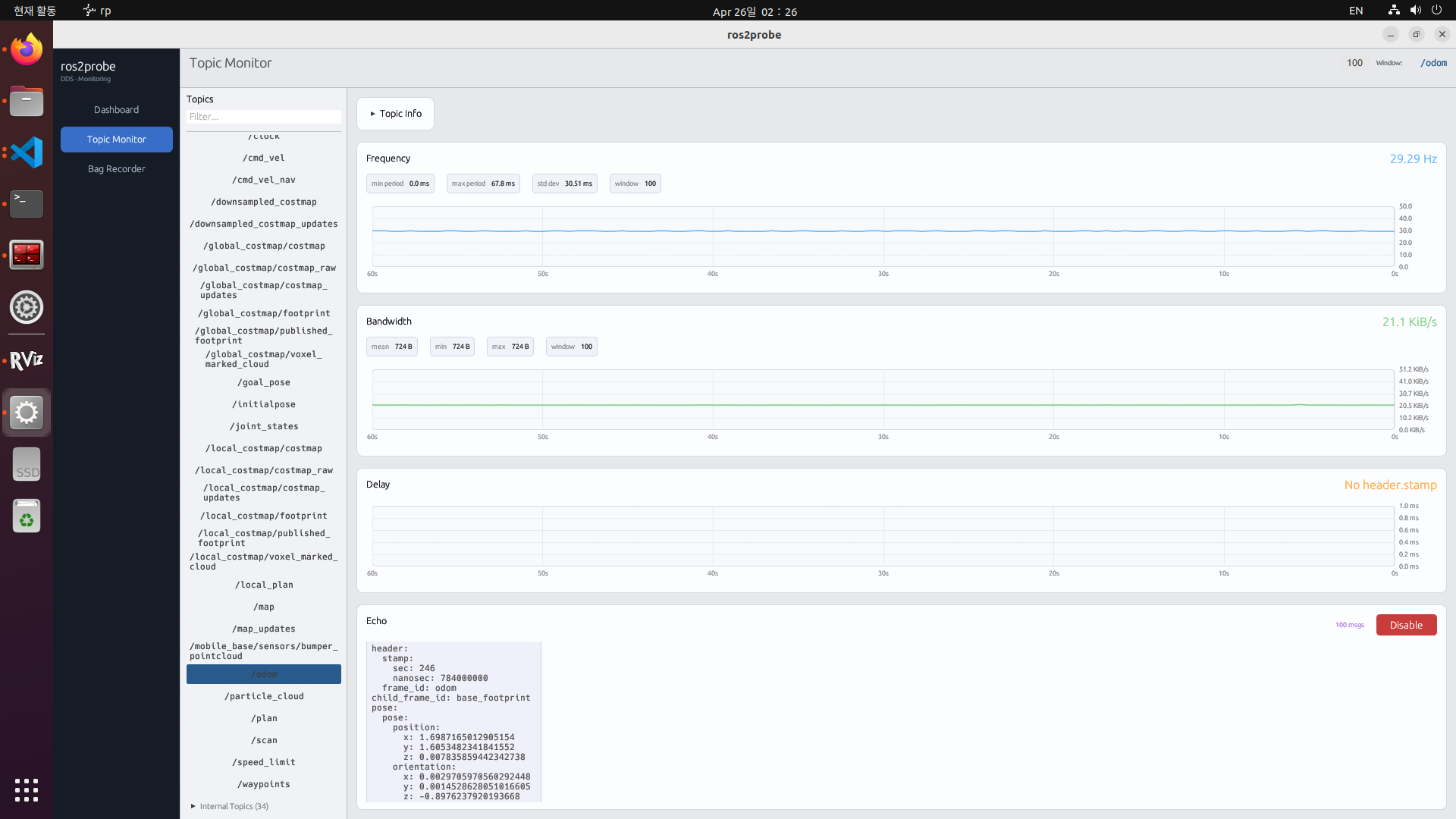

rp gui → Topic Monitor 2

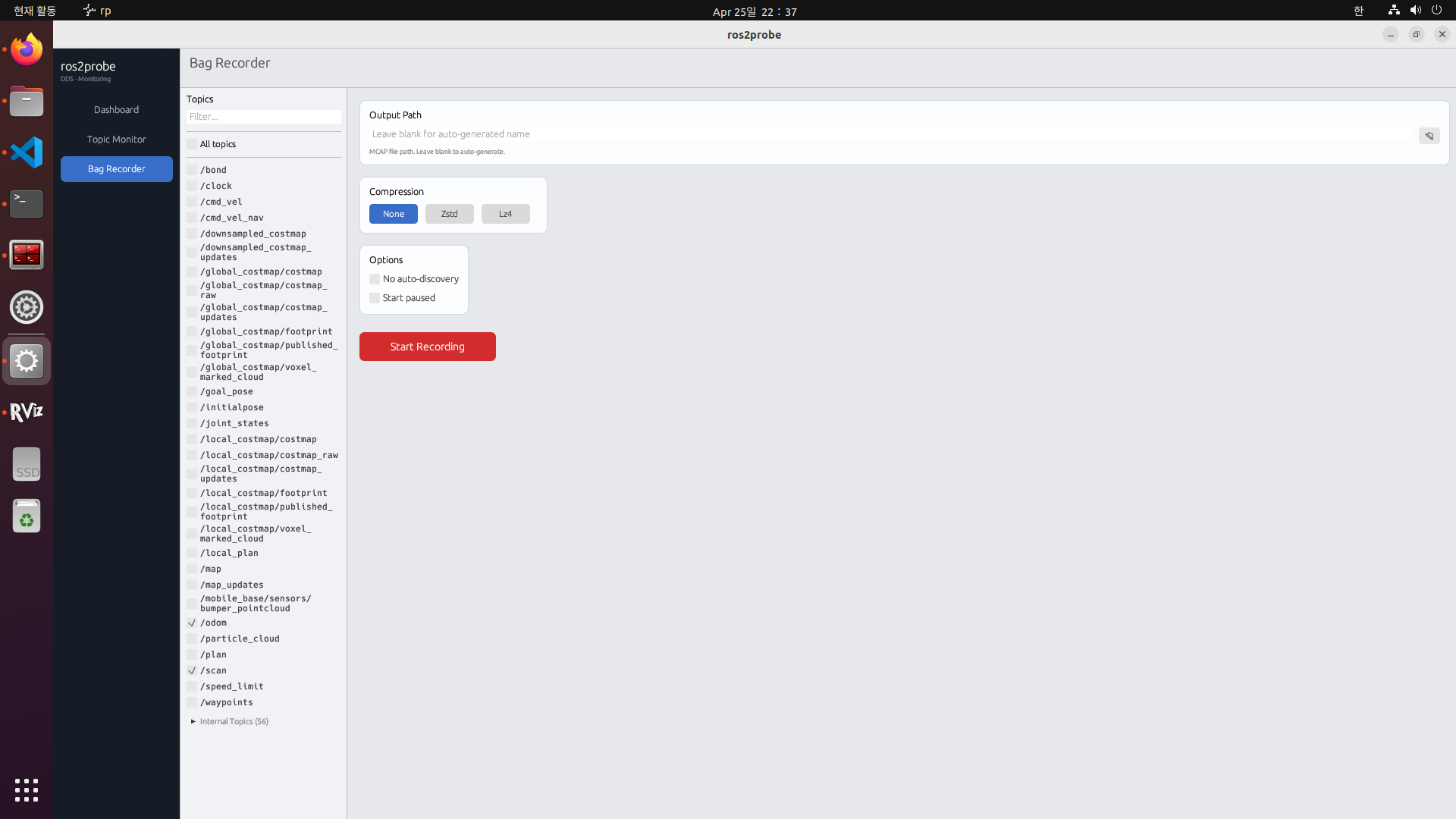

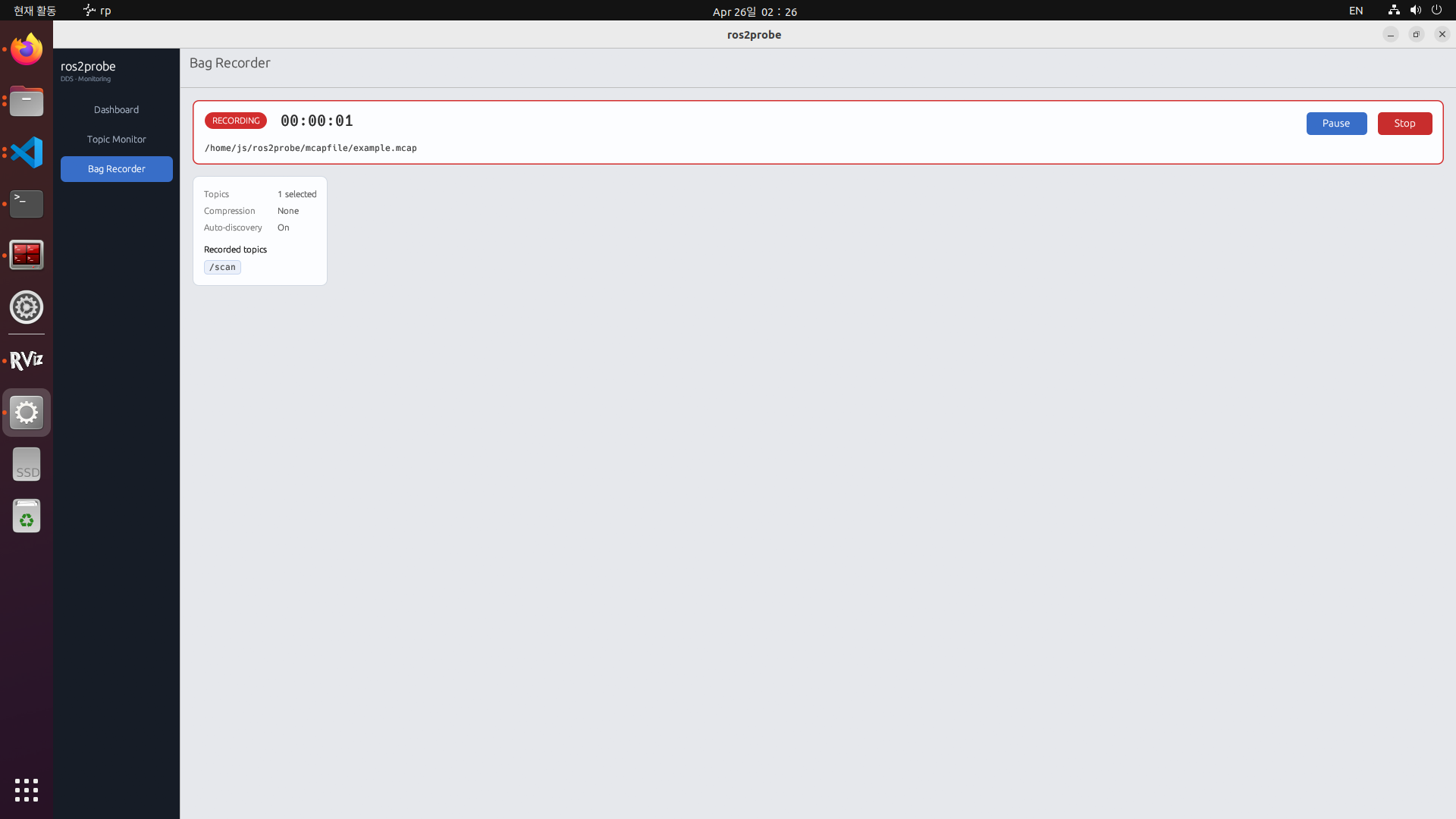

rp gui → Bag Recorder 1

rp gui → Bag Recorder 2

CLI at a glance

| Command | Description |
|---|---|
| `rp run` | Start the runtime daemon (auto-escalates to root if needed) |
| `rp gui` | Launch the desktop GUI |
| `rp topic list` | List topics with recordable / internal separation |
| `rp topic hz <topic>` | Live publish rate (mean / min / max / stddev) |
| `rp topic bw <topic>` | Live bandwidth |
| `rp topic delay <topic>` | End-to-end delay from header.stamp |
| `rp topic echo <topic>` | Stream decoded messages (rclpy-backed) |
| `rp bag record [TOPICS…]` | Record MCAP bag · zstd / lz4 / none |
| `rp node list` · `info` | Discovered nodes and their endpoints |
| `rp discover` | Force ros_discovery_info broadcast (SHM-aware shadow mode) |
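
To make the `rp topic hz` columns concrete, here is a minimal sketch of how mean / min / max / stddev figures can be derived from message arrival times. The source does not say whether rp computes min, max, and stddev over rates or over inter-arrival periods; this sketch assumes periods, mirroring the convention of ROS 2's `ros2 topic hz`. The function name `rate_stats` and the result keys are hypothetical.

```python
import statistics


def rate_stats(stamps):
    """Summarize publish rate from arrival times (seconds, in increasing order).

    Assumption: min / max / stddev describe the inter-arrival period,
    not the instantaneous rate. rp's actual definition is not documented here.
    """
    if len(stamps) < 2:
        raise ValueError("need at least two timestamps")
    # Period between each pair of consecutive messages.
    periods = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
    mean_period = statistics.fmean(periods)
    if mean_period <= 0:
        raise ValueError("timestamps must be increasing")
    return {
        "rate_hz": 1.0 / mean_period,          # mean publish rate
        "min_period_s": min(periods),          # shortest gap between messages
        "max_period_s": max(periods),          # longest gap between messages
        "stddev_period_s": statistics.pstdev(periods),  # population std dev of gaps
    }
```

The same list of periods also shows jitter directly: a large gap between `min_period_s` and `max_period_s` points at bursty publishing even when the mean rate looks healthy.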